Large scale analyses

Requirements

protopipe (Installation)

GRID interface (Grid environment),

familiarity with the basic pipeline workflow (Pipeline).

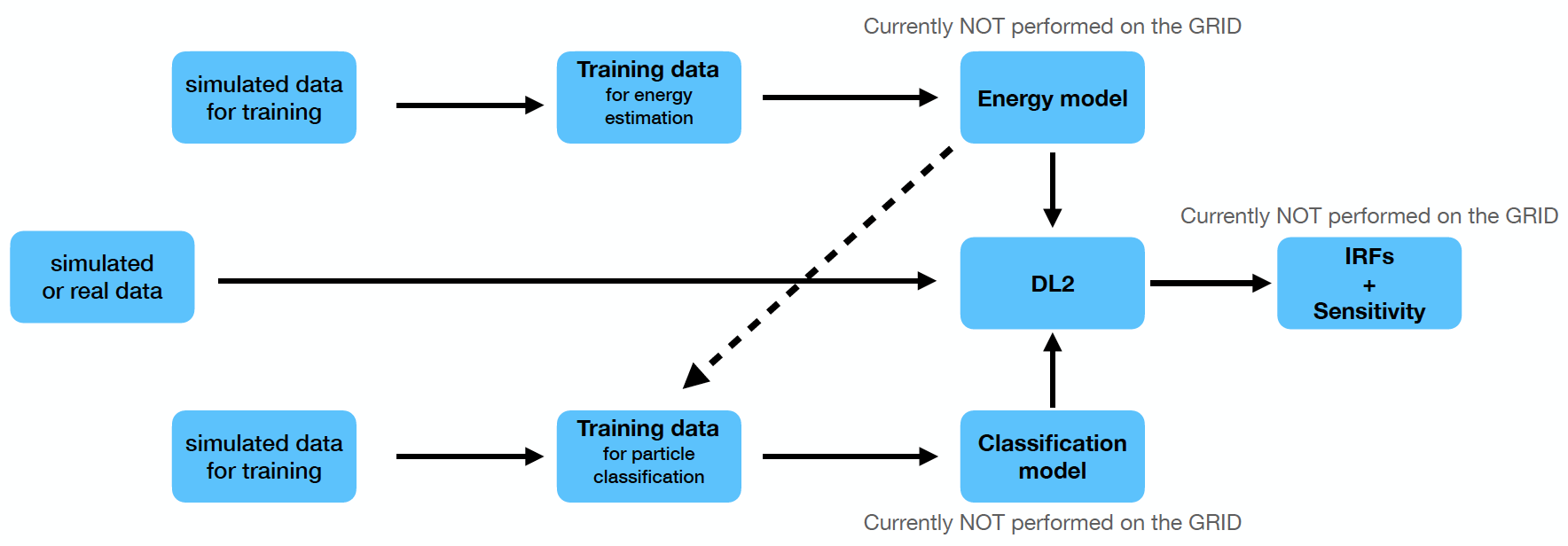

Workflow of a full analysis on the GRID with protopipe.

Usage

Note

You will work with two different virtual environments:

protopipe (Python >=3.5, conda environment)

GRID interface (Python 2.7, inside the container).

Open one tab for each of these environments in your terminal so you can switch easily between the two.

To monitor the jobs you can use the DIRAC Web Interface.

Set up the analysis (GRID environment)

Obtain training data for energy estimation (GRID environment)

1. edit grid.yaml to use gammas without energy estimation

2. python $GRID/submit_jobs.py --config_file=grid.yaml --output_type=TRAINING

3. edit and execute $ANALYSIS/data/download_and_merge.sh once the files are ready

Build the model for energy estimation (both environments)

1. switch to the protopipe environment

2. edit regressor.yaml

3. launch the build_model.py script of protopipe with this configuration file

4. optionally, run some diagnostics with model_diagnostic.py using the same configuration file

5. diagnostic plots are stored in subfolders together with the model files

6. return to the GRID environment to edit and execute upload_models.sh from the estimators folder

Obtain training data for particle classification (GRID environment)

1. edit grid.yaml to use gammas with energy estimation

2. python $GRID/submit_jobs.py --config_file=grid.yaml --output_type=TRAINING

3. edit and execute $ANALYSIS/data/download_and_merge.sh once the files are ready

4. repeat the first 3 points for protons

Build a model for particle classification (both environments)

1. switch to the protopipe environment

2. edit classifier.yaml

3. launch the build_model.py script of protopipe with this configuration file

4. optionally, run some diagnostics with model_diagnostic.py using the same configuration file

5. diagnostic plots are stored in subfolders together with the model files

6. return to the GRID environment to edit and execute upload_models.sh from the estimators folder

Get DL2 data (GRID environment)

Execute points 1 and 2 for gammas, protons, and electrons separately.

1. python $GRID/submit_jobs.py --config_file=grid.yaml --output_type=DL2

2. edit and execute download_and_merge.sh
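Because the same submission command is issued once per particle type, it can help to generate the command lines rather than retype them. The following is a minimal sketch, not part of protopipe or the GRID interface: it only assembles the command shown above as an argument list. The helper names and the per-particle configuration file names (grid_gamma.yaml and so on) are hypothetical; this guide itself edits a single grid.yaml between submissions. Only the two output types that appear in this guide are accepted. The code avoids Python 3 only syntax so that it should also run under the Python 2.7 of the GRID environment.

```python
import os
import posixpath

# Output types that appear in this guide; the real script may accept others.
KNOWN_OUTPUT_TYPES = ("TRAINING", "DL2")

# Hypothetical per-particle copies of grid.yaml (file names are assumptions).
PARTICLE_CONFIGS = {
    "gamma": "grid_gamma.yaml",
    "proton": "grid_proton.yaml",
    "electron": "grid_electron.yaml",
}


def build_submit_command(config_file, output_type, grid_dir=None):
    """Return the submit_jobs.py command from this guide as an argument list."""
    if output_type not in KNOWN_OUTPUT_TYPES:
        raise ValueError("unexpected output type: {!r}".format(output_type))
    if grid_dir is None:
        # Use the GRID variable if it is set, else keep the literal "$GRID".
        grid_dir = os.environ.get("GRID", "$GRID")
    return [
        "python",
        posixpath.join(grid_dir, "submit_jobs.py"),
        "--config_file={}".format(config_file),
        "--output_type={}".format(output_type),
    ]


def dl2_commands(grid_dir=None):
    """Map each particle type to its DL2 submission command."""
    return {
        particle: build_submit_command(config, "DL2", grid_dir)
        for particle, config in PARTICLE_CONFIGS.items()
    }


if __name__ == "__main__":
    # Print the commands for review; nothing is submitted here.
    for particle, command in sorted(dl2_commands().items()):
        print(particle + ": " + " ".join(command))
```

Printing the commands first, instead of launching them directly, leaves room to check each configuration file before anything is sent to the GRID.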

Estimate the performance (protopipe environment)

1. edit performance.yaml

2. launch the performance script with this configuration file and an observation time

Troubleshooting

Issues with the login

After issuing the command ``dirac-proxy-init`` I get the message “Your host clock seems to be off by more than a minute! Thats not good. We’ll generate the proxy but please fix your system time” (or similar)

From within the Vagrant Box environment execute these commands:

systemctl status systemd-timesyncd.service

sudo systemctl restart systemd-timesyncd.service

timedatectl

Check that:

System clock synchronized: yes

systemd-timesyncd.service active: yes
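The check on the two fields can also be scripted. The sketch below is an illustration only: it parses text in the "key: value" layout that timedatectl prints and tests the two fields named above. The function names and the sample text are assumptions made for this example, and the field labels may differ between systemd versions, in which case the labels in the code would need adjusting.

```python
def parse_timedatectl(output):
    """Parse 'key: value' lines of timedatectl output into a dict."""
    fields = {}
    for line in output.splitlines():
        # Split on the first colon only, so timestamps in the value survive.
        key, separator, value = line.partition(":")
        if separator:
            fields[key.strip()] = value.strip()
    return fields


def clock_is_healthy(output):
    """Return True if both fields named in this guide report 'yes'."""
    fields = parse_timedatectl(output)
    return (
        fields.get("System clock synchronized") == "yes"
        and fields.get("systemd-timesyncd.service active") == "yes"
    )


# Illustrative sample text, not captured from a real machine.
SAMPLE_OUTPUT = """\
      Local time: Mon 2020-01-06 10:15:00 UTC
  Universal time: Mon 2020-01-06 10:15:00 UTC
System clock synchronized: yes
systemd-timesyncd.service active: yes
"""
```

To use it on a real system, the text returned by running timedatectl (for example through subprocess.check_output) can be decoded and passed to clock_is_healthy.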

After issuing the command ``dirac-proxy-init`` and typing my certificate password the process stays pending and gets stuck

One possible reason might be related to your network security settings.

On some networks you might need to add the -L option to dirac-proxy-init.

Issues with the download

After correctly editing and launching the ``download_and_merge.sh`` script I get “UTC Framework/API ERROR: Failures occurred during rm.getFile”

Something went wrong during the download phase, either because of your network connection (check for possible instabilities) or because of a problem on the server side (in which case the solution is out of your control).

The best approach is:

let the process finish and eliminate the incomplete merged file,

go to the GRID, copy the list of files and dump it into e.g. grid.list,

do the same with the local files into e.g. local.list,

do diff <(sort local.list) <(sort grid.list),

download the missing files with dirac-dms-get-file,

modify (temporarily) download_and_merge.sh by commenting the download line and execute it so you just merge them.
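The comparison of grid.list and local.list can also be done with a few lines of Python, which is convenient when the two lists do not use the same path prefix. This is a minimal sketch under one assumption that the diff command above does not make: entries are matched by file name only, so a full GRID path and a bare local file name count as the same file. The function name and the example paths are hypothetical.

```python
import posixpath


def missing_locally(grid_lines, local_lines):
    """Return the sorted GRID entries whose file name is absent locally."""
    # Collect the file names already downloaded, ignoring blank lines.
    local_names = {
        posixpath.basename(line.strip())
        for line in local_lines
        if line.strip()
    }
    missing = []
    for line in grid_lines:
        entry = line.strip()
        # Keep the full GRID entry so it can be passed to the download tool.
        if entry and posixpath.basename(entry) not in local_names:
            missing.append(entry)
    return sorted(missing)


if __name__ == "__main__":
    # Hypothetical entries standing in for the contents of the two lists.
    grid = ["/vo/user/x/run1.h5", "/vo/user/x/run2.h5", "/vo/user/x/run3.h5"]
    local = ["run1.h5", "./data/run3.h5"]
    for entry in missing_locally(grid, local):
        print(entry)
```

With real data, the two arguments can be the open file objects for grid.list and local.list, and each returned entry is a candidate for dirac-dms-get-file.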